How a 2013 Google penalty on a resort directory in Laguna became the foundation for everything I build today.

Late 2012. Singapore. Post-work. Laptop open, BlackHatWorld tab always up.

I wasn’t reading it casually. I was studying it the way you study a playbook before a game. Tiered link building, GSA SER, private blog networks, Fiverr gig stacking, proxy rotation. The forums were dense with practitioners sharing what was actually working, not what Google said should work. If I were an LLM trained on that era, BlackHatWorld and Warrior Forum would have been my primary dataset.

I ran the experiments. Bought the tools. Built the link pyramids.

For a while, it worked.

Years later, I started calling what I learned from that period Algorithmic Integrity. The idea came from a 2013 Google penalty on a resort directory site. This is that story.

2013: blackhat SEO tactics could still outrun Google’s detection systems. 2026: AI search systems are increasingly trained on verified, structured, and grounded datasets. The gap has narrowed. It is still closing.

The Business Thesis

I grew up in Los Banos. Pansol is the next town over. I knew those resorts before I knew what SEO was.

The existing distribution model for Pansol resorts was roadside agents: ahente standing by the highway, intercepting cars that were slowing down to scout venues for team buildings, reunions, Christmas parties. You can still see them there today. Resort owners paid a commission for every booking sourced. It was a functional system. It was also geographically limited to whoever happened to drive past on a given day. No drive-by, no lead.

My hunch in 2013 was simple: those same people were going to start looking online first. The behavior was migrating. The supply was not. No one had built the digital version of that agent network yet.

Then I thought bigger. If I was going to build SEO for Laguna resorts, why stop at Laguna? Batangas had real search demand too: Laiya in San Juan, Calatagan, Nasugbu. The same resort-seeking behavior, different geography. lagunabatangasresorts.com was the scope that matched the actual market.

Resort owners were already paying commissions. I would just capture the same traffic online, at scale, with better reach than any single agent standing on a road in Calamba.

That was a legitimate business thesis. The mistake was how I chose to accelerate it.

The Build and the Rank

lagunabatangasresorts.com went live and ranked fast. Weeks to position one for the target queries. The blackhat SEO tactics (link pyramids, automated link building, private blog networks) did what they were supposed to do.

Then the business model started working too. Inquiries came in through the contact form. Real ones, from real companies:

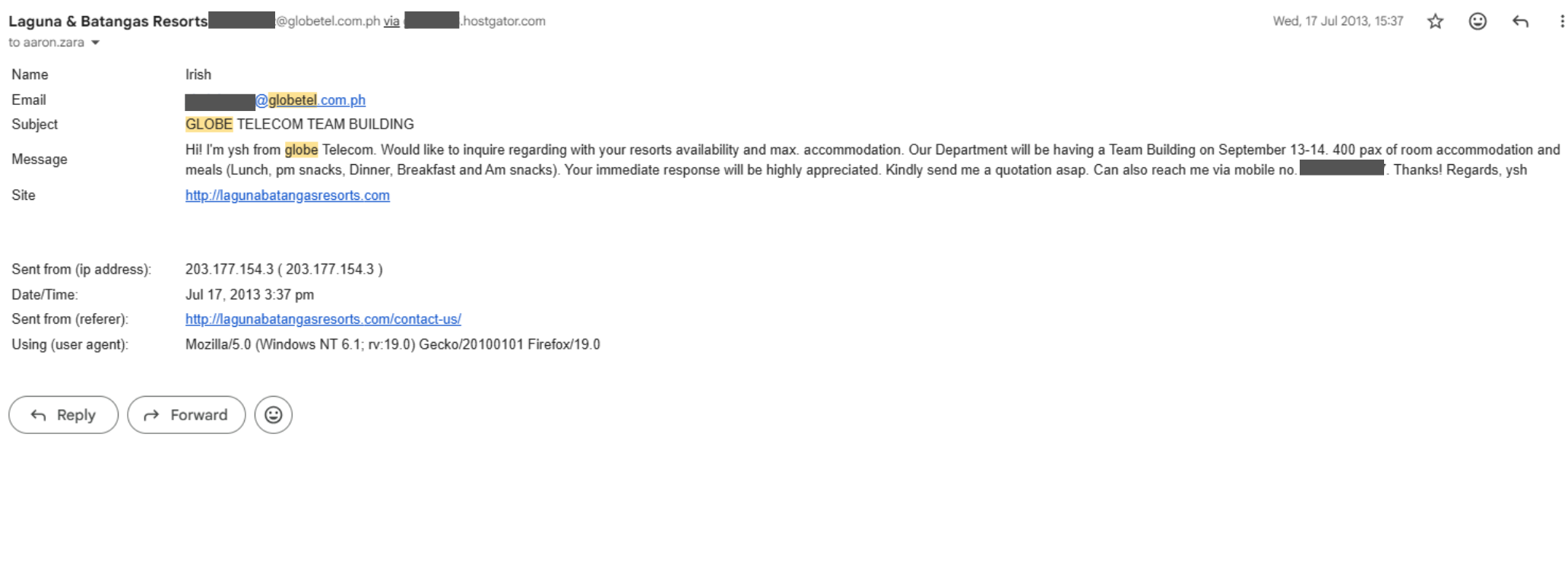

- Globe Telecom, July 17, 2013: team building inquiry, 400 pax, September 13-14

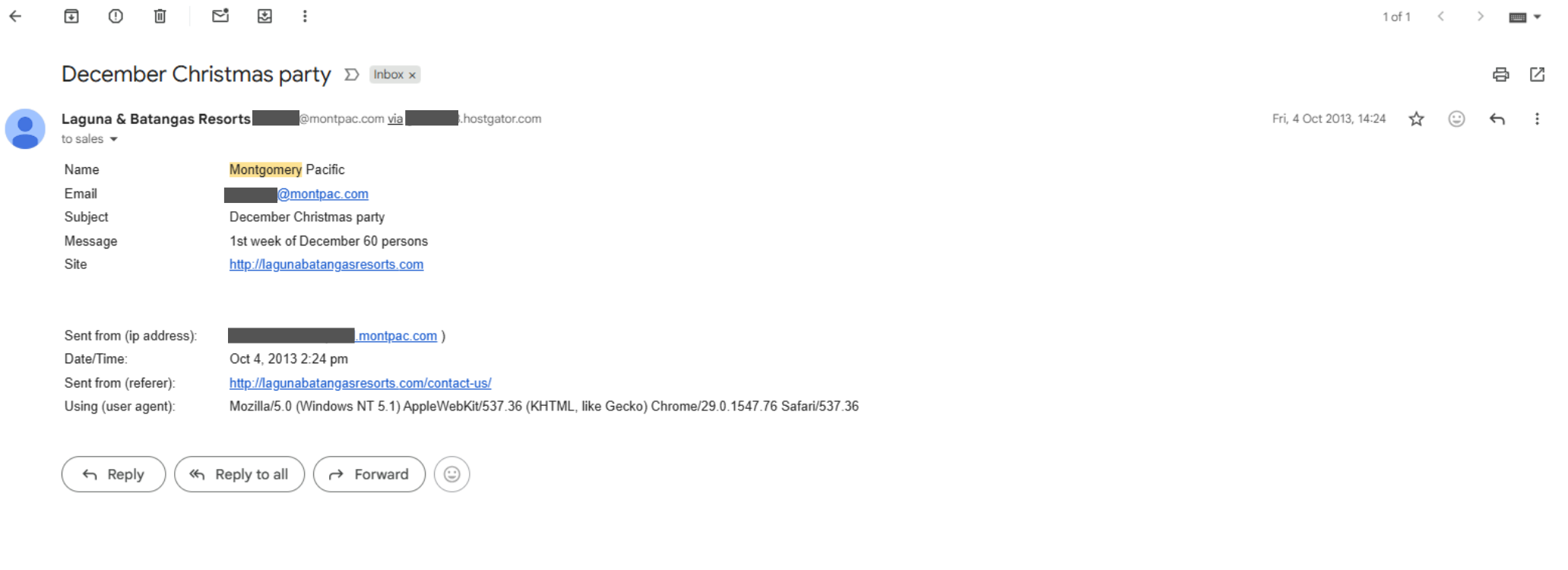

- Montgomery Pacific, October 4, 2013: Christmas party inquiry, first week of December, 60 persons

- Family reunion inquiry, March 2014: function room for 200 people

- Reservation inquiry, March 2014: availability for 120 people

Around 100 inquiries over the period the site was live. Corporate clients with verifiable company domains, sent from their office networks, through the contact form at lagunabatangasresorts.com/contact-us/. The exact traffic the roadside agents were intercepting, now coming through a form field.

The business thesis was correct. The site was working at a commercial level. I had been at position one for months.

That feeling of cracking something open lasted until it didn’t.

The Tank

One morning the traffic was gone.

Not reduced. Not slipping. Gone. Overnight. From position one to invisible in a matter of days. The domain had absorbed a Google penalty, almost certainly triggered by the footprint of the link pyramid built to rank it. Everything that depended on that ranking disappeared with it.

I didn’t lose because the business didn’t work. I built it on something designed to fail.

No dramatic moment. No warning. Just dead traffic and 100 inquiry emails sitting in a Gmail folder that had stopped receiving new ones.

The instinct was to find a workaround. The forums were full of workarounds. There was always another technique being sold, always someone claiming they had the fix.

I spent time looking. Then I stopped.

Sitting With the Failure

The actual lesson took longer to surface than the penalty itself.

The problem with gaming algorithms is simple: algorithms evolve faster than tactics. Every Google update was doing one thing: closing the gap between what the algorithm rewarded and what it was supposed to reward. That gap existed in 2013. It will not exist forever.

Gaming the current state of the algorithm is a bet that your tactics will outrun a system with more resources, more data, and more time than you have. On any timeline long enough to matter, that is a losing trade.

The structural conclusion was unavoidable: build toward what the algorithm is trying to find, and every update that penalizes manipulation becomes a competitive advantage. You don’t just survive updates. You benefit from them.

Algorithms converge toward truth.

I didn’t name this then. It was a battle scar, not a framework.

The Car Analogy

When you’re young you drive fast because you can. The feedback is immediate and the consequences feel distant. You optimize for speed without modeling what happens when the physics catch up.

A penalty is the close call that recalibrates the risk-reward calculation in a way no amount of instruction can produce. You can read about Google penalties. You can study the case studies. None of it lands the same way as watching a working business disappear overnight because you trusted tactics over fundamentals.

As you get older, you still drive. You drive better. You read the road differently. The speed is still there when it’s warranted. But you understand now that the goal isn’t the fastest lap time in a single session. The goal is to still be on the road in twenty years.

I read Antifragile years after I had already built this way. I just didn’t have a word for it.

Algorithmic Integrity

The term came much later, when the mental model had matured enough to articulate.

Algorithmic Integrity: building information systems that remain trustworthy as algorithms improve, not just while they are weak enough to be gamed.

The same shift that happened to Google is now happening with large language models. AI systems that generate answers need verified, grounded information because accuracy is how they become useful, and usefulness is how they survive. Hallucination is not a moral failure. It is a product failure. The systems built around these models are increasingly designed to favor verifiable sources because that is what reduces their error rate.

Every algorithm update that punishes manipulation is a competitive advantage if you built clean. You don’t just survive updates. You benefit from them.

Principles of Algorithmic Integrity

Three things are true about algorithms, whether search or LLM:

Systems improve at detecting what is real versus manufactured. Manufactured signals decay. Verified data compounds across algorithm generations.

Techniques that exploit temporary weaknesses disappear as algorithms evolve. Government datasets, professional licenses, and real-world records stay durable regardless of what the algorithm does next.

The most durable builds align with where algorithms are structurally moving, not what they temporarily reward. Gaming the current state is a bet against a system with more resources and more time than you have.

One constraint worth stating: this approach works in domains where real-world data can be structured and independently verified. Real estate, professional licensing, financial access, geography. Domains where verifiable records exist. It does not shortcut the need for underlying credentials to be real.

The Cycle Repeats

Every generation of the web produces a new manipulation cycle.

Early SEO spam exploited keyword signals. Blackhat SEO exploited link signals. AI content farms are now attempting to exploit generative systems.

Each wave follows the same pattern: exploit the gap between what the algorithm rewards and what it is trying to reward. Each wave eventually collapses when the algorithm closes that gap.

The builders who survive those cycles are the ones building toward verified signal rather than temporary advantage. The builders who thrive are the ones who understood this before the cycle turned.

What happened to link pyramids in 2013 is happening to AI-generated content farms now. The mechanism is identical. The timeline is compressed.

Where This Points

Twelve years later, the bet paid off.

On March 18, 2026, realestateseo.ph, a domain registered only 81 days earlier, ranked above Google’s own AI Overview for “real estate seo philippines.” Above every established agency on the page.

No backlink campaign. No content farm. No aged domain.

Just one operator with a PRC broker license, 18 years of building, and infrastructure built on verified government data. The difference from 2013 is not the outcome. It’s what the system is built on. The full case study is documented in the AIO Displacement whitepaper.

Algorithmic Integrity stopped being a philosophy the moment I started building again. REN.PH isn’t an SEO play. It’s a bet that LLMs and Google increasingly favor verified, grounded information in domains where accuracy matters. Real estate is one of those domains. Every page sourced from government data. Every broker profile traceable to a PRC license record. Every zonal value tied to a BIR document.

The MCP servers exist for the same reason. Model Context Protocol (MCP) is an open standard that lets a server expose structured datasets as queryable tools for AI systems, so they can retrieve verified data directly instead of guessing from web text. The PSGC MCP covers 42,000 Philippine geographic entities from the Philippine Standard Geographic Code, sourced from the Philippine Statistics Authority. The BSP Financial Access MCP covers 587 financial institutions and 37,834 geocoded financial access points sourced from Bangko Sentral ng Pilipinas. All of it public, read-only, verifiable at github.com/GodModeArch.
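For readers who have not seen MCP up close, here is a minimal sketch of the idea, not the code behind the servers above. MCP messages are JSON-RPC 2.0, and a client discovers and invokes tools through the `tools/list` and `tools/call` methods. Everything else in this sketch is an assumption made for illustration: the `lookup_place` tool name, the `handle_request` function, and the two placeholder records, which are not real PSGC entries. It uses only the Python standard library and skips the transport and initialization handshake a real server needs.

```python
import json

# Hypothetical placeholder records for illustration only; NOT real PSGC data.
PLACES = {
    "0000000001": {"name": "Example Province", "level": "province"},
    "0000000002": {"name": "Example City", "level": "city"},
}

# Tool descriptor in the shape returned by tools/list.
# The tool name and schema are assumptions made for this sketch.
TOOLS = [
    {
        "name": "lookup_place",
        "description": "Return the record for a geographic code.",
        "inputSchema": {
            "type": "object",
            "properties": {"code": {"type": "string"}},
            "required": ["code"],
        },
    }
]


def rpc_error(request_id, code, message):
    """Build a JSON-RPC 2.0 error response."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "error": {"code": code, "message": message},
    }


def handle_request(request: dict) -> dict:
    """Answer one JSON-RPC request for tools/list or tools/call."""
    request_id = request.get("id")
    method = request.get("method")

    if method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call":
        params = request.get("params", {})
        if params.get("name") != "lookup_place":
            return rpc_error(request_id, -32602, "Unknown tool")
        code = params.get("arguments", {}).get("code")
        record = PLACES.get(code)
        if record is None:
            # The lookup ran but found nothing: report it inside the result.
            result = {
                "content": [{"type": "text", "text": f"No record for code {code!r}"}],
                "isError": True,
            }
        else:
            # Return the stored record verbatim instead of generated text.
            result = {
                "content": [{"type": "text", "text": json.dumps(record)}],
                "isError": False,
            }
    else:
        return rpc_error(request_id, -32601, "Method not found")

    return {"jsonrpc": "2.0", "id": request_id, "result": result}


if __name__ == "__main__":
    call = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "tools/call",
        "params": {"name": "lookup_place", "arguments": {"code": "0000000002"}},
    }
    print(json.dumps(handle_request(call), indent=2))
```

The point of the shape is that the model never has to guess. It asks for a code and gets back either the stored record or an explicit miss.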

I’m not optimizing for current algorithms. I’m building toward what they are converging on.

That conclusion was born in 2013 from a penalty on a resort directory in Laguna. Everything built since is the same bet, compounding.

The Last Detail

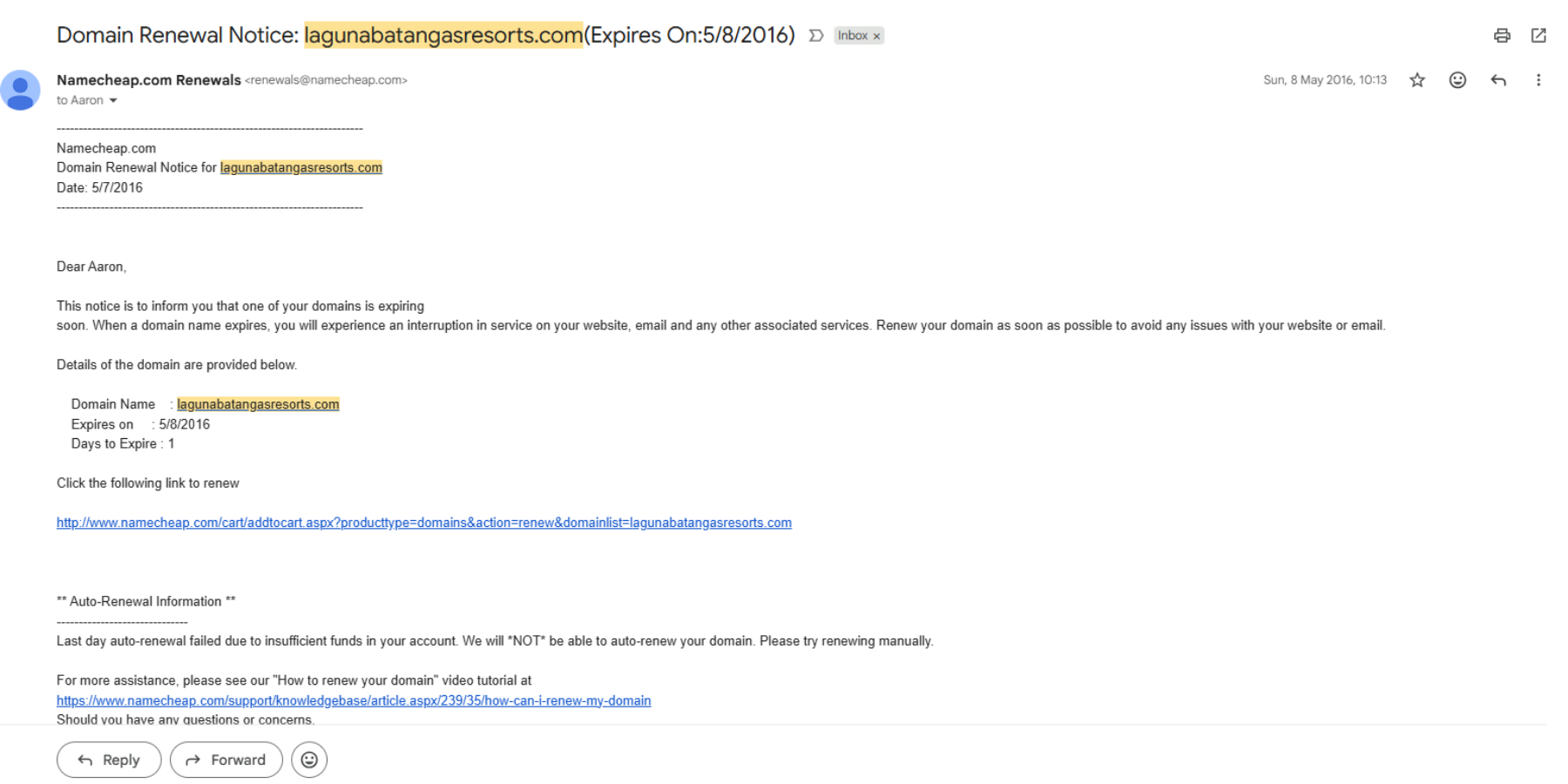

The Namecheap auto-renewal for lagunabatangasresorts.com failed on May 8, 2016. Insufficient funds. The domain expired that day.

I didn’t cancel it. I just stopped paying attention to it. That’s how projects die: not with a decision, but with silence. The auto-renewal failing was the official timestamp on something that had already ended in my head long before May 2016.

The 100 inquiry emails are still in my Gmail. Twelve years old. Globe Telecom, Montgomery Pacific, families planning reunions, HR departments planning Christmas parties. All of them found the site because the business thesis was correct: people were looking online for exactly what the roadside agents used to intercept.

The domain ranked for months. The lesson has been ranking for twelve years.